Stealthy website scanning thanks to archive.org

Mar 6, 2020 by Thibault Debatty

https://cylab.be/blog/62/stealthy-website-scanning-thanks-to-archiveorg

Scanning a website is an important step of the reconnaissance phase. Several tools, such as BlackWidow, can automate this process. Here we present another tool, waybackurls, which lets you scan a website without leaving any trace on the target servers.

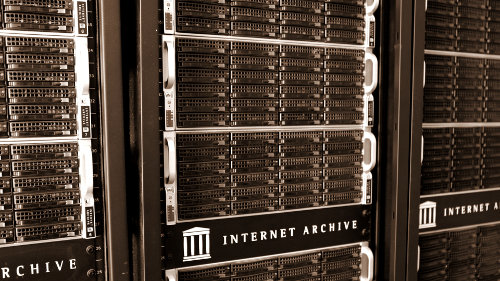

To achieve this, waybackurls never contacts the target: it queries the Wayback Machine of the Internet Archive instead. This project keeps archived copies of over 418 billion web pages and offers a convenient REST API (the CDX server API).

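For example, the following query lists the URLs of cylab.be known to the Wayback Machine:

http://web.archive.org/cdx/search/cdx?url=cylab.be/*&output=json&fl=original&collapse=urlkey

Here fl=original keeps only the original URL of each capture, and collapse=urlkey returns each URL only once, even if it was archived many times.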
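The same query can be built and decoded programmatically. The Python sketch below is our own illustration, not code taken from waybackurls: it rebuilds the URL above and extracts the URL list from the JSON response, whose first row holds the field names (here just "original"). The function names are hypothetical.

```python
"""Sketch: list archived URLs of a domain through the Wayback Machine CDX API."""
import json
from urllib.parse import urlencode
from urllib.request import urlopen

CDX_ENDPOINT = "http://web.archive.org/cdx/search/cdx"


def build_cdx_query(domain: str) -> str:
    """Return the CDX query URL listing archived URLs below `domain`."""
    params = {
        "url": f"{domain}/*",   # every path below the domain
        "output": "json",       # JSON array of rows instead of plain text
        "fl": "original",       # only return the original URL field
        "collapse": "urlkey",   # one row per distinct URL, not per capture
    }
    # Keep '/' and '*' unescaped so the URL matches the hand-written query.
    return f"{CDX_ENDPOINT}?{urlencode(params, safe='/*')}"


def parse_cdx_json(body: str) -> list[str]:
    """Extract URLs from a CDX JSON response.

    Row 0 contains the field names; each following row holds one value
    per requested field (only "original" here).
    """
    rows = json.loads(body) if body.strip() else []
    return [row[0] for row in rows[1:]]


def list_archived_urls(domain: str, timeout: float = 30.0) -> list[str]:
    """Fetch the list from archive.org; the target site is never contacted."""
    with urlopen(build_cdx_query(domain), timeout=timeout) as resp:
        return parse_cdx_json(resp.read().decode("utf-8"))


if __name__ == "__main__":
    # Only prints the query URL; no request is sent.
    print(build_cdx_query("cylab.be"))
```

Calling list_archived_urls("cylab.be") performs a single request to web.archive.org; nothing is sent to cylab.be itself.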

Using waybackurls with Docker

The easiest way to use waybackurls is with Docker:

$ docker pull cylab/waybackurls

You can then run waybackurls by feeding it a list of domains on stdin:

$ echo "cylab.be" | docker run -i cylab/waybackurls
https://cylab.be/
https://cylab.be/css/app.css
http://cylab.be/favicon.ico
https://cylab.be/fonts/et-line.eot?26ec3c7d0366e0825d705c6e22
https://cylab.be/fonts/et-line.eot?26ec3c7d0366e0825d705c6e22?
https://cylab.be/fonts/et-line.svg?569bd9082c15cc30fa6e05626a
https://cylab.be/fonts/et-line.ttf?98126e3e1238b0f3b941ad285320
https://cylab.be/fonts/et-line.woff?b01ff252761958325faab1535c9
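Because waybackurls reads domains on stdin and writes one URL per line on stdout, it fits naturally into shell pipelines. The Python sketch below imitates that filter behaviour under our own assumptions; it is not the tool's actual implementation. archived_urls and fetch_from_archive are hypothetical names, the query parameters are copied from the example CDX URL, and printing each URL only once across all input domains is a design choice of this sketch.

```python
"""Hypothetical waybackurls-like filter: domains in, archived URLs out."""
import json
from typing import Callable, Iterable, Iterator
from urllib.parse import urlencode
from urllib.request import urlopen


def fetch_from_archive(domain: str) -> list[str]:
    """Ask the Wayback Machine CDX API (never the target) for archived URLs."""
    query = urlencode(
        {"url": f"{domain}/*", "output": "json", "fl": "original", "collapse": "urlkey"},
        safe="/*",
    )
    with urlopen(f"http://web.archive.org/cdx/search/cdx?{query}", timeout=30) as resp:
        body = resp.read().decode("utf-8")
    rows = json.loads(body) if body.strip() else []
    return [row[0] for row in rows[1:]]  # row 0 is the header ["original"]


def archived_urls(
    lines: Iterable[str],
    fetch: Callable[[str], list[str]] = fetch_from_archive,
) -> Iterator[str]:
    """Yield every archived URL once, for each non-empty domain line."""
    seen: set[str] = set()
    for line in lines:
        domain = line.strip()
        if not domain:
            continue  # ignore blank input lines
        for url in fetch(domain):
            if url not in seen:  # skip URLs already printed for another domain
                seen.add(url)
                yield url
```

To use it as a filter, loop over archived_urls(sys.stdin) and print each item, then pipe domains into the script just as with the Docker image. Passing a fake fetch function lets you exercise the logic without any network access.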

Manual installation and usage

Waybackurls is written in Go, so you can also install it manually and run it directly on your host machine:

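$ go get github.com/tomnomnom/waybackurls
$ echo "cylab.be" | ~/go/bin/waybackurls

The binary is installed in $GOPATH/bin, which defaults to ~/go/bin. Since Go 1.18, go get no longer installs commands; with a recent Go toolchain, use this instead:

$ go install github.com/tomnomnom/waybackurls@latest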

This blog post is licensed under CC BY-SA 4.0.